Most AI initiatives don’t fail because of the model. They fail because of poor system design, weak workflows, fragmented context, and lack of coordination at scale.

Introduction

AI investment is accelerating across industries, yet the same pattern keeps repeating: pilots show promise, but progress stalls when it’s time to scale.

A chatbot performs well in a demo.

A workflow automation use case gets attention.

A few internal tasks become faster.

On the surface, it looks like progress.

But early traction is not the same as scalable execution.

Once AI moves into real operations, the cracks start to show.

Outputs become inconsistent.

Edge cases increase.

Teams stop trusting the system.

Manual work starts creeping back in.

What looked efficient in a controlled environment starts breaking under real business complexity.

At that point, the model usually gets blamed.

Usually, that’s not the real issue.


Why AI Strategy Often Focuses on the Wrong Problem

A lot of AI strategy still revolves around one question:

“Which model should we use?”

It sounds important, but in most real-world deployments, the model is rarely the main limitation.

Once a model reaches a reasonable level of performance, the bigger question becomes:

Can AI operate reliably inside the complexity of your business?

That’s where most AI implementation challenges begin.

Across organizations, there is a familiar pattern:

Heavy investment goes into:

- Model selection

- AI tools and platforms

- Experiments and pilots

Minimal investment goes into:

- System design

- Data structure

- Workflow architecture

- Cross-system coordination

This imbalance is one of the biggest reasons why AI projects fail to scale.

AI usually doesn’t fail because it lacks intelligence.

It fails because it is placed inside systems that were never designed to support it.

Why Early AI Wins Often Don’t Hold Up

The first phase of AI adoption is usually encouraging.

Organizations often see quick wins such as:

- A chatbot reducing repetitive support queries

- Teams using AI for research or documentation

- Internal workflows getting partially automated

These early results create momentum.

But as AI usage expands, the cracks start to show.

What begins to happen:

- Outputs become less consistent

- Exceptions and edge cases increase

- Teams start second-guessing AI decisions

- Human review becomes more frequent

This is where many companies start looking for a “better model.”

But in most cases, the problem is structural.

What worked in a controlled pilot often fails under the messiness of real operations.

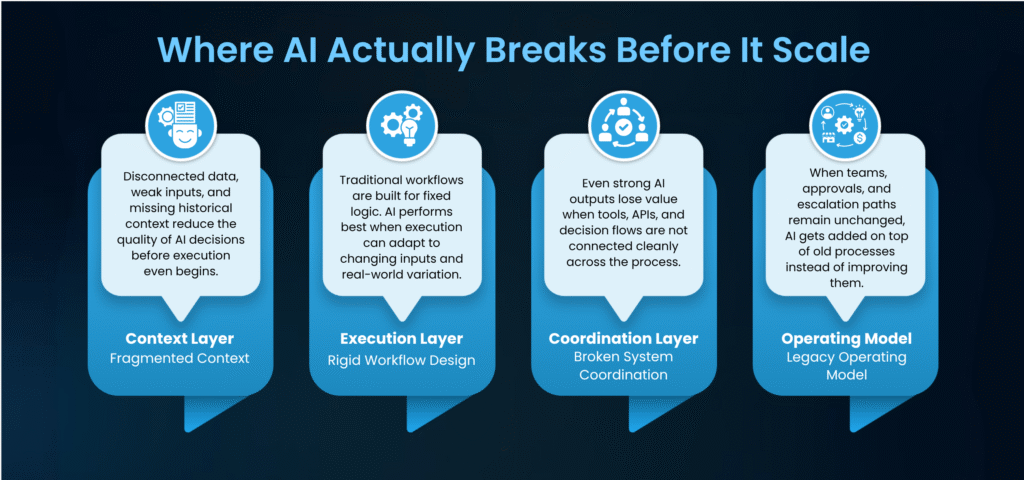

The Four Layers Where AI Actually Breaks

AI rarely breaks because of one dramatic issue.

It usually breaks because the surrounding system is weak across four critical layers.

- Context Layer: The Missing Foundation

Data may exist across the business, but it is often fragmented across systems, teams, and tools.

What is usually missing:

- Connected context across systems

- Clean, structured inputs

- Historical continuity

- Business-specific relevance

Without this, AI can still generate outputs, but those outputs tend to feel:

- Generic

- Shallow

- Inconsistent

More data alone doesn’t solve this.

Better context does.

If AI cannot see the full operational picture, it cannot perform consistently.
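As a rough illustration of what "connected context" can mean in practice, the sketch below joins records from three sources into one object before any model is called. The source names (`crm`, `tickets`, `messages`), the field names, and the five-message cap are all assumptions made for this example, not a description of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerContext:
    """One connected view of a customer, assembled before the model is called."""
    customer_id: str
    profile: dict = field(default_factory=dict)
    open_tickets: list = field(default_factory=list)
    recent_messages: list = field(default_factory=list)
    missing_sources: list = field(default_factory=list)


def build_context(customer_id, crm, tickets, messages, max_messages=5):
    """Join hypothetical CRM, ticketing, and messaging records by customer id."""
    ctx = CustomerContext(customer_id=customer_id)

    # Record gaps explicitly instead of silently passing partial context on.
    if customer_id in crm:
        ctx.profile = crm[customer_id]
    else:
        ctx.missing_sources.append("crm")

    ctx.open_tickets = [
        t for t in tickets
        if t["customer_id"] == customer_id and t["status"] == "open"
    ]

    # Historical continuity: oldest first, capped to the most recent messages.
    history = sorted(
        (m for m in messages if m["customer_id"] == customer_id),
        key=lambda m: m["sent_at"],
    )
    ctx.recent_messages = history[-max_messages:]
    return ctx
```

The point of the `missing_sources` field is that a downstream step can tell the difference between "this customer has no CRM record" and "nothing was checked", which is one small way to make fragmented context visible rather than hidden.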

- Execution Layer: Where Rigid Workflows Limit AI

Traditional automation is built around fixed logic.

AI is different.

It works best when it can:

- interpret inputs dynamically

- handle ambiguity

- adjust decisions based on context

The problem is that many organizations try to force AI into rigid workflow structures built for older automation systems.

When that happens:

- AI loses flexibility

- It struggles with exceptions

- It becomes unreliable under variation

This is one of the biggest differences between using AI and building systems that can execute with AI.
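The contrast between fixed logic and context-aware execution can be sketched in a few lines. Everything here is hypothetical: the keyword table, the queue names, the `classify` callable (a stand-in for any model that returns a label and a confidence score), and the 0.7 threshold are assumptions chosen only to make the difference concrete.

```python
# Hypothetical keyword-to-queue table of the kind used in older automation.
RULES = {"refund": "billing_queue", "password": "it_queue"}


def route_fixed(text):
    """Fixed logic: the first matching keyword wins; anything else has no path."""
    lowered = text.lower()
    for keyword, queue in RULES.items():
        if keyword in lowered:
            return queue
    return None


def route_with_model(text, classify, threshold=0.7):
    """Let a classifier interpret the input, and escalate when it is unsure.

    `classify` is an assumed callable returning (queue_name, confidence).
    """
    queue, confidence = classify(text)
    if confidence < threshold:
        # Ambiguous input gets an explicit path instead of a silent failure.
        return "human_review"
    return queue
```

With the fixed table, a message such as "My parcel arrived damaged" matches nothing and simply falls out of the workflow. The second function gives variation somewhere to go: either the classifier's answer or a named review step.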

- Coordination Layer: The Invisible Failure Point

In production, AI systems usually involve a network of:

- Models

- Data sources

- Workflow logic

- Human decision points

That means AI success depends not just on output quality, but on how well all these components work together.

When coordination is weak:

- Information gets lost between steps

- Tasks don’t complete cleanly

- Teams are forced to manually fill in gaps

For example, AI may classify a customer issue correctly, but if it cannot trigger the right action across CRM, ticketing, and escalation systems, the process still fails.

This is often the difference between a system that works in a demo and one that works at scale.
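The customer-issue example above can be sketched as a small coordination step: after classification, every downstream action is attempted and its outcome is tracked, so a failed handoff is surfaced rather than lost. The system names and the shape of each action callable are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffResult:
    """Tracks which downstream systems received a classified issue."""
    label: str
    completed: list = field(default_factory=list)
    failed: list = field(default_factory=list)

    @property
    def needs_human(self):
        # Any failed handoff means the process did not complete cleanly.
        return bool(self.failed)


def coordinate(label, payload, actions):
    """Run every downstream action for a classified issue and record each outcome.

    `actions` maps a hypothetical system name (for example "crm") to a
    callable that takes (label, payload) and raises on failure.
    """
    result = HandoffResult(label=label)
    for system, action in actions.items():
        try:
            action(label, payload)
            result.completed.append(system)
        except Exception:
            result.failed.append(system)
    return result
```

A correct classification with `needs_human` set to true is exactly the case described above: the model did its job, but the process as a whole did not.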

- Operating Model: Where People and AI Fall Out of Sync

AI doesn’t just change outputs.

It changes how work moves.

But many organizations try to deploy AI while keeping the surrounding operating model unchanged.

They keep the same:

- approval flows

- team structures

- escalation patterns

- process assumptions

Then they add AI on top and expect efficiency to appear automatically.

It usually doesn’t.

What happens instead:

- Teams override AI too often

- Trust remains low

- Adoption stays shallow

Technology shifts without operational shifts rarely deliver meaningful results.

A Real-World Pattern

Consider a mid-size e-commerce company trying to scale customer support with AI.

What worked initially

They deployed an LLM-powered support assistant connected to FAQs and help documentation.

Early results looked strong.

The system reduced simple query load and handled repetitive requests well.

What changed under real usage

As support interactions became more complex, performance declined.

Customer queries increasingly required:

- order history

- past conversations

- channel continuity

- system-level decision logic

At that point:

- responses became inconsistent

- escalations increased

- support teams stopped relying on the system

What was actually wrong

The problem wasn’t the model.

The real bottleneck was structural:

- Customer data was spread across disconnected systems

- There was no unified context across CRM, tickets, and communication channels

- There was no clear flow from query → decision → resolution

- The system had no feedback loop to improve over time

What changed

Instead of replacing the model, the company redesigned the system around it.

They focused on:

- creating a unified context layer

- designing agent-driven workflows

- improving coordination across systems

- building continuous feedback loops
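One way to picture the combination of a clear query → decision → resolution flow with a feedback loop is the minimal sketch below. It is not the company's implementation. The `decide` and `resolve` callables, the `Outcome` fields, and the override-rate metric are all assumptions chosen to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """One pass through the query -> decision -> resolution flow."""
    query: str
    decision: str
    resolution: str
    overridden: bool = False  # set to True when a human corrects the result


class FeedbackLog:
    """Keeps outcomes so that human overrides can be measured over time."""

    def __init__(self):
        self.outcomes = []

    def record(self, outcome):
        self.outcomes.append(outcome)

    def override_rate(self, decision=None):
        """Share of outcomes that humans overrode, optionally per decision type."""
        rows = [o for o in self.outcomes if decision is None or o.decision == decision]
        if not rows:
            return 0.0
        return sum(o.overridden for o in rows) / len(rows)


def handle(query, decide, resolve, log):
    """Run the three explicit stages and log the result for later review."""
    decision = decide(query)
    resolution = resolve(decision, query)
    outcome = Outcome(query, decision, resolution)
    log.record(outcome)
    return outcome
```

Tracking the override rate per decision type gives teams a concrete signal about where the system is trusted and where it still needs redesign, instead of relying on a general impression that "the AI is inconsistent".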

Business impact

The result was meaningful:

- 60% reduction in manually handled tickets

- More consistent responses

- Higher team confidence

- Clearer ROI

The breakthrough came from redesigning the system, not upgrading the AI.

What Separates Scalable AI from Experiments

The organizations that scale AI successfully focus on more than tools.

They focus on:

- Systems, not just models

- Context, not just data

- End-to-end workflows, not just isolated tasks

- Coordination, not just output

- Operational alignment, not just adoption

They understand that AI is not just a feature.

It is an operational capability.

That is what separates experimentation from real execution.

Closing Perspective

AI is often framed as a breakthrough in intelligence.

And it is.

But in practice, its business impact is constrained by something much less advanced:

The quality of the surrounding system

Until that changes:

- Better models won’t fix inconsistency

- More tools won’t create leverage

- More pilots won’t lead to scale

The organizations that move ahead will not simply be the ones experimenting the most.

They will be the ones removing the structural friction that prevents AI from working in real conditions.

That is where real AI scale begins.

Contact Us

As AI adoption grows, the real challenge is not the model; it is building the system around it. Businesses that improve context, workflows, and coordination are better positioned to scale AI successfully.

If you are looking to move beyond pilots and build AI systems that deliver measurable business value, contact us to collaborate with specialists in AI-driven workflow and system design.

From strategy to delivery, we are here to make sure that your business endeavor succeeds.

Whether you’re launching a new product, scaling your operations, or solving a complex challenge, Hoop Konsulting brings the expertise, agility, and commitment to turn your vision into reality. Let’s build something impactful together.

Free up your time to focus on growing your business with cost-effective AI solutions!